I'm Sam Muthu

25 years of building enterprise systems. Reinventing the how since late 2025.

🔬 The Experiment

After leading platform transformations at Wells Fargo and serving as CTO at startups, I asked: what happens when you treat AI models as first-class engineering partners — not autocomplete tools? I've been finding out since late 2025.

📊 The Result

I shipped 2 live production products as a solo engineer — CosmicKeys (multi-region, 7 languages, voice narration) and WatchAlgo (AI-generated content at scale) — work that traditionally requires teams of 5-7 engineers. The real skill isn't memorizing syntax. It's knowing what to build, and orchestrating AI to build it right.

Multi-Agent Orchestration

Claude architects, Gemini reviews, Codex executes — in parallel.

Assembly Line Pipelines

1,500+ files generated autonomously with validation and self-repair.

Production at Scale

3 regions, 7 languages, Stripe billing, real users.

Stack Agnostic

Next.js, FastAPI, PostgreSQL, GCP — chosen by need, not habit.

RAG + Enterprise AI

Built retrieval-augmented generation with multi-tenant architecture.

Human-in-the-Loop Safety

Circuit breakers, approval gates, and quality guardrails built in.

The Intellectual Lineage Behind My Products

Three of my shipped projects share one philosophical backbone — borrowed from a thinker I respect deeply.

“It isn't 10,000 hours that creates outliers, it's 10,000 iterations.”

— Naval Ravikant

Naval Ravikant is the biggest influence on how I think about deliberate practice and building your own tools. His 10,000-iterations theory became the backbone for three projects (Segment Loop Master, CosmicKeys, WatchAlgo); his broader thinking on personal sovereignty — own your stack, keep your knowledge yours — shaped a fourth (Mnemos, my open-source local-first personal RAG).

Two threads from one thinker, four projects: three iterate toward mastery, one keeps your knowledge yours.

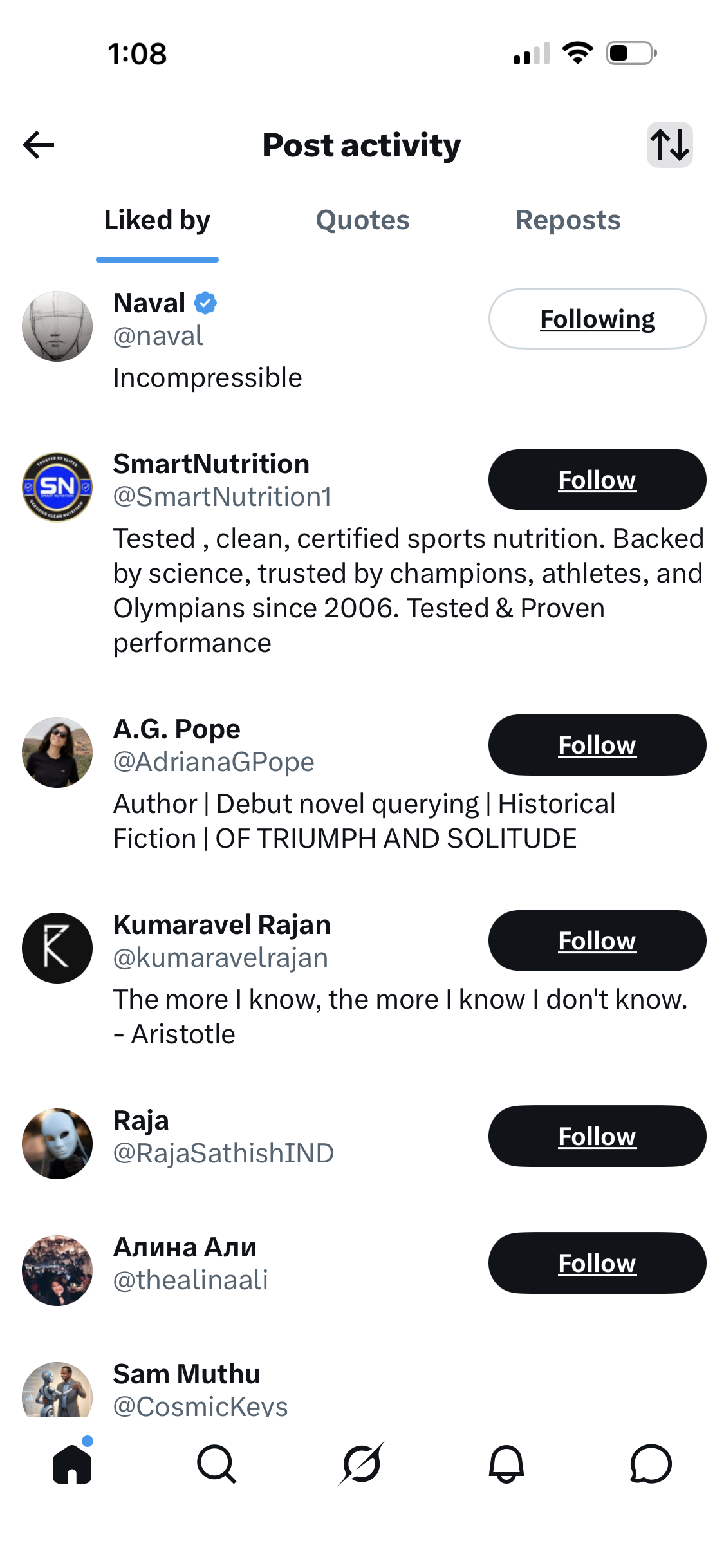

A small tap from the source

The actual sequence — precision matters when the person being talked about might one day read the page. In October 2025, after building Segment Loop Master and publishing its implementation page on this site, I replied to @naval's 10,000-iterations thread with the link — “your 10-word tweet became my personal product requirement.” He liked that reply (presumably after visiting the page). That single tap meaningfully shifted my confidence about the direction. Four months later, with CosmicKeys live in 7 languages and 9 regions, I posted a follow-up to the same thread about it. He didn't engage with that one — Naval doesn't repeat-engage like that, and I wasn't expecting him to. WatchAlgo and Mnemos came after, each on its own page.

Naval doesn't liberally hand out likes. When he engages with a builder, it's signal, not noise.

Small tap, big meaning when it's from the source.

AI-Native Engineering: A Living Index

Architecture Deep Dives

Most engineers use AI as autocomplete. I architect production agentic systems — multi-agent orchestration, RAG pipelines, model routing, and automated quality gates.

AI Factory

Production agentic framework with multi-agent orchestration, RAG pipelines, and Report Cards.

CosmicKeys

Typing platform with voice narration, 7 languages × 9 regions, multi-region anycast routing.

WatchAlgo

AI content factory: 3,247 problems × 3 languages × 3 flavors with automated validation.

Cosmic Managed AI Service

The managed AI service layer for the post-hyperscaler era — vendor-agnostic, infra-first, BYO-everything. 5 pillars + control plane + 4 deployment envelopes.

Mnemos

Personal RAG, 100% local by default. Drop a folder, ask cited questions from your laptop — or from your phone via a private Telegram bot. MIT-licensed, active development (v0.11).

AI Foundations

How I think about LLMs, agents, and production AI — from first principles to shipped systems.

Enterprise RAG Anatomy

Full production RAG pipeline — architectural + sequence diagrams, 15-step walkthrough, naive vs agentic.

Agent Frameworks

LangChain, CrewAI, AutoGen, LlamaIndex, Pydantic AI — honest comparison with code and when to pick each.

No-Code Agent Builder

Flowise — the open-source visual canvas for LLM apps and agents (acquired by Workday). Hands-on series: install, build, inspect the database, and what I'd evolve.

Microsoft AI

Azure AI Foundry, Azure OpenAI, GitHub Copilot, Responsible AI Toolbox. The enterprise stack for organizations anchored on Entra ID, M365, and Azure regional compliance.

Google AI · Vertex AI · Gemini

Vertex AI (Model Garden, Endpoints, Pipelines, Vector Search, Agent Builder) plus Gemini's multimodal 1M+ token context. The GCP-native managed stack.

OpenAI

GPT-4o, GPT-5, o-series reasoning, the Responses API, Realtime API, Codex CLI, Swarm agents. The pace-setter on frontier capability.

Anthropic · Claude · MCP

Claude (Opus, Sonnet, Haiku), Claude Code, MCP, Computer Use, Constitutional AI. The most opinionated take on AI-native software engineering.

MCP Server Pattern

Got a REST API? Here's how to wrap it as an MCP server so AI agents (Claude Desktop, Cursor) consume it natively — and how to build a CLI that uses the same server. Subscription-status example, runnable code.

Model-Agnostic Architecture

Your AI vendor is a dependency, not a destiny. Six layers — provider gateway, model-selection decision matrix, guardrails, multi-tenant isolation, and encryption in transit (mTLS) and at rest.
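As a rough illustration of the provider-gateway layer only, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `ProviderGateway` class, the task-to-provider `routes` table, and the idea of modelling a provider as a plain callable are hypothetical and are not taken from the actual architecture page. A real gateway would wrap vendor SDK clients and add the guardrail and isolation layers listed above.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical: a provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


class ProviderGateway:
    """Routes a task to an ordered list of providers, falling back on failure."""

    def __init__(self, providers: Dict[str, Provider], routes: Dict[str, List[str]]):
        # Fail fast if a route names a provider that was never registered.
        for task, names in routes.items():
            for name in names:
                if name not in providers:
                    raise ValueError(f"route '{task}' references unknown provider '{name}'")
        self.providers = providers
        self.routes = routes

    def complete(self, task: str, prompt: str) -> Tuple[str, str]:
        """Return (provider_name, output) from the first provider that succeeds."""
        errors = []
        for name in self.routes[task]:
            try:
                return name, self.providers[name](prompt)
            except Exception as exc:  # any provider failure triggers fallback
                errors.append(f"{name}: {exc}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    # Stub providers stand in for real vendor clients.
    def primary(prompt: str) -> str:
        raise TimeoutError("simulated outage")

    def backup(prompt: str) -> str:
        return f"reviewed: {prompt}"

    gateway = ProviderGateway(
        providers={"primary": primary, "backup": backup},
        routes={"review": ["primary", "backup"]},
    )
    print(gateway.complete("review", "check this diff"))
```

Because callers only name a task, swapping or reordering vendors is a change to the `routes` table rather than to application code.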

Observability & Evals

Langfuse, LangSmith, Braintrust, RAGAS, DeepEval, LlamaGuard — how the industry is adopting LLM observability, evaluation, and safety tooling.

Platform Anatomy

Control plane + data plane + guardrails + observability. Vendor-neutral architectural reference with Microsoft, AWS, and custom-stack mappings.

What I Learned by Building

An Engineer's Journey Through the AI Transformation

After 25 years building enterprise systems — from Wells Fargo's identity platform to HP's webOS ecosystem to Ericsson's global streaming infrastructure — I realized something: the craft of software engineering has fundamentally changed.

The skills that matter now aren't memorizing APIs or writing boilerplate. They're architectural intuition, problem decomposition, and knowing how to orchestrate AI models to produce reliable, production-quality output. I've been proving this since late 2025 — not by reading papers, but by shipping real products. Every product below was built by one engineer and an LLM Council of AI collaborators.

The Onyx RAG Platform below is one example — a retrieval-augmented generation system with multi-tenant architecture, custom PDF connectors, and semantic search. It's the kind of system enterprises need, and it took weeks instead of quarters to prototype.

🎥 Video preview: RAG/Onyx (coming soon)

Segment Loop Master

10,000 Iterations to Mastery

Inspired by @naval's wisdom "It's actually 10,000 iterations to mastery, not 10,000 hours. And it's not even 10,000 but some unknown number—it's about the number of iterations that drives the learning curve."

Iteration is NOT repetition

Repetition is doing the same thing over and over. Iteration is modifying it with a learning and then doing another version; that's error correction. Get 10,000 error corrections in anything, you'll be an expert.

Interestingly, Hindu traditions understood this with mantras repeated 108 or 1,008 times—but perhaps what was lost in translation was the iteration aspect. It wasn't just repetition, but conscious refinement with each cycle.

I built this video segment looper for deliberate practice—to master concepts through conscious iteration, not mindless repetition. While I can't release it publicly due to content rights, if you're interested in the concept, reach out. Together we could create a platform where people upload their own content for iterative learning.

Technical Mastery Hub

17 Learning Paths • 5 Domains • Infinite Possibilities

From ZenAlgo to Cloud Architecture. From AI Development to DevOps Excellence.

I'm building the technical learning platform I wish existed—where complex concepts become crystal clear through interactive visualizations, real-world projects, and battle-tested patterns.

Master the art of scaling from 1K to 1 billion users with proven DevOps strategies.

Currently live: Onyx RAG Prototype showcasing enterprise AI capabilities. Coming soon: Deep dives into AWS, MCP Servers, Claude Code mastery, and more.

The Truth About AI Agents

Properly architected, they're 10-100x. Used as autocomplete, they're 2-3x.

📐 Spec-First, Not Prompt-First

Most engineers treat AI like autocomplete. The ones who get real leverage treat it like an architectural collaborator. Before I ask an agent to build anything, I brainstorm the spec with it — modularization, multi-tenancy, failure modes, observability. Only after the spec is solid does implementation happen. The quality of the output is bounded by the quality of the spec, not the cleverness of the prompt.

📝 Context Compounds

Context is the most undervalued resource in AI development. I have the agent document its own context as it works — decisions, issues hit, dead ends, rationale. Every future interaction inherits that map. After a month in the same codebase, the same agent is exponentially more effective than on day one because the context compounds. Most teams throw this away every conversation.
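A minimal sketch of what such a context log could look like, assuming an append-only JSON Lines file that the agent writes to during a session and reads back at the start of the next one. The `ContextLog` class, the four entry kinds, and the file name are hypothetical choices for illustration, not the tooling actually used here.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import List

# Hypothetical entry kinds, mirroring the categories named in the text above.
KINDS = ("decision", "issue", "dead_end", "rationale")


@dataclass
class ContextEntry:
    kind: str
    summary: str


class ContextLog:
    """Append-only log of what an agent learned while working in a codebase."""

    def __init__(self, path: str):
        self.path = Path(path)

    def record(self, kind: str, summary: str) -> ContextEntry:
        """Append one entry; rejects kinds outside the agreed vocabulary."""
        if kind not in KINDS:
            raise ValueError(f"unknown kind '{kind}', expected one of {KINDS}")
        entry = ContextEntry(kind, summary)
        with self.path.open("a", encoding="utf-8") as fh:
            fh.write(json.dumps(asdict(entry)) + "\n")
        return entry

    def entries(self) -> List[ContextEntry]:
        """Read every entry recorded so far, oldest first."""
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as fh:
            return [ContextEntry(**json.loads(line)) for line in fh if line.strip()]

    def briefing(self) -> str:
        """Render the log, grouped by kind, as text to prepend to the next session."""
        recorded = self.entries()
        lines = ["Context from earlier sessions:"]
        for kind in KINDS:
            lines += [f"- [{kind}] {e.summary}" for e in recorded if e.kind == kind]
        return "\n".join(lines)


if __name__ == "__main__":
    log = ContextLog("agent_context.jsonl")
    log.record("dead_end", "Tried caching at the ORM layer; invalidation was unreliable.")
    log.record("decision", "Cache at the API gateway instead.")
    print(log.briefing())
```

The point of persisting to a file rather than keeping notes in the conversation is that a fresh session can start from `briefing()` instead of from zero.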

📊 The Three Tiers Are Real

Everyone claims 10x productivity from AI now. Most are measuring against themselves pre-Copilot. The reality is tiered:

- Tier 1: Autocomplete (Copilot, Cursor inline suggestions). 2-3x over traditional.

- Tier 2: Vibe coding (“build me this” prompts, accept output, debug reactively). 5-10x, but fragile and undifferentiated.

- Tier 3: Spec-driven AI-native (architect with AI, agent orchestration, evaluation frameworks, cost-aware routing). Another 10x on top of Tier 2.

The gap between Tier 2 and Tier 3 isn't another tool or a better prompt. It's architectural thinking that can't be copied from a tutorial.

The limit isn't the model's intelligence. It's the clarity of your specification.

Your Vision, My Expertise

Choose Your Path to Collaboration

Looking to hire world-class engineering talent? I'm ready to bring 25 years of platform experience and AI-native engineering practice to your team.

For Employers

Looking for a Distinguished Engineer, Architect, or Staff Engineer who can transform your technology vision into reality.

View Interactive Resume

Want more details? Ask ChatGPT about me. It knows a few things 😉